Much of the data used to justify the welfare card is flawed

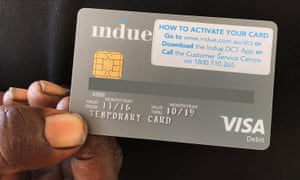

Figure: ‘My criticism [...] includes the user questionnaire design, its length, the order of questions, the language and shape of some questions, and importantly, the probable contamination of responses.’ Photograph: Melissa Davey for the Guardian

The headline in The Australian was “Grog abuse drops under welfare card”. Human services minister Alan Tudge is reported as saying “There are very few other initiatives that have had such impact”. The accompanying box of results, headed “A better life”, included the claim that “41% of drinkers reported drinking alcohol less frequently”, as did 37% of binge drinkers, along with 48% of gamblers and drug takers.

These statistics form the basis of the government’s case for extending the program and increasing the number of sites. The other support for the success of the trials comes from some qualitative interviews, mainly with local leaders, often white, who supported the introduction of the trials and are not neutral observers.

On the same day there was a media release from prime minister Malcolm Turnbull and minister Tudge claiming the final independent evaluation of the trials of the card showed that it had “considerable positive impact” in the communities in which it operated, in particular in reducing alcohol and drug use and gambling. However, the data quoted are much less valid and reliable than official views claim.

These are the most recent moves in a welfare policy shift towards ever more restrictive conditional payments. The current addition of areas is being justified by “trials” of the cashless debit card, proposed and strongly supported by Andrew Forrest and his Minderoo Foundation. However, the media coverage above, and wider reporting, singularly fail to question the validity of the research findings and therefore the legitimacy of some drastic and expensive changes to welfare policy. Government-funded evaluations in 2014 of similar cash-control trials in the NT were negative, reporting that they failed to achieve similar desired outcomes. These reports, including the present one, are available on various pages of the Department of Social Services evaluation sites.

At best, the current evaluation of these trials could offer some possible indications that those over-dependent on addictive substances could benefit from some restriction of their access to cash. The results do not, however, support claims for restricting all local welfare recipients’ access to cash. Extending the trials and seeking to expand the locations, as now announced, is not justifiable on the basis of the “evidence” offered in the report. Much of the data collected from participants, if carefully examined, is flawed, and some of the more qualitative responses reported are also questionable.

As a social policy advocate, I am not a supporter of highly conditional welfare because there is little evidence it works. However, as a sociologist, I examine the evidence carefully. There are serious flaws in the data collection described in the Orima report. Some of the limits are acknowledged in the report, but not by the government. My comments mainly cover wave two data, as these are the results most quoted by the government to justify the program’s expansion.

What are the disputed points?

My criticism is wider than the limitations acknowledged in the report, as it includes the user questionnaire design, its length, the order of questions, the language and shape of some questions, and importantly, the probable contamination of responses. Preliminary information, read from the tablet used to record answers, includes the promise of a $30 or $50 gift card on completion. Paying respondents affects both their relationship with the interviewer and their answers. The next step is asking for respondents’ ID. This is to avoid duplication, but, as this is an official government survey, the reassurance of confidentiality may not be believed, which could affect responses. Given Indigenous anxieties about authority and welfare, respondents are likely to give the answers they think are acceptable. It is also not clear whether the interviews were private or conducted in the presence of others, which may also affect answers. These effects on the data collected are likely to be serious and to undermine the legitimacy of the responses.

The above difficulties raise doubts about the validity and reliability of the responses collected, and about how far they represented what respondents thought they ought to say rather than what they did or felt. There are more problems in the questions asked, as detailed below. There are also sampling problems: because participants were passers-by approached in public places, it is doubtful how representative those interviewed were. This raises the question of who refused and who was never sampled. Results were apparently weighted, but on what basis? What was the “universe” used, and did it allow for possible differences in the responses of the more than 20% who refused, and of those not likely to be in those locations?

The questionnaire design problems

These comments relate to wave two of the survey, conducted in 2017 with 479 participants, which is identified as the data source for the quoted changes in alcohol and drug use as well as gambling and associated problems. There are many problems with the structure of the questionnaire and the actual questions. Firstly, starting with lots of complex questions on demographics is unlikely to engage the respondent’s interest or to set up an equitable relationship. The boring bits should be left till last, along with the personal ones that make people uncomfortable.

Secondly, there are serious issues in the design of the crucial questions on which most of the government’s claims of efficacy are based. They follow complex questions on family, location and households, as well as knowledge of the card and other administrative questions. Section C starts with a statement to be read to respondents on the content, which, it says, will “cover personal things, including your money situation, how much you gamble, how much alcohol you drink, whether you take drugs, whether you have been arrested, beaten up or robbed and how safe you feel in your community”. This list would cause most of us, let alone already disadvantaged people, some serious anxieties and concerns.

The questions cover details of possible problems, such as running out of money or food or being unable to meet children’s needs, and how often these occurred over the past three months, with no positive options. These are followed by similar questions on personal use of and spending on alcohol, drugs and gambling, then by whether they are looking for a job, and their support for school-age children, if present. There are questions on whether they are proud or ashamed of their community and whether they feel safe. These are questions likely to cause distress and discomfort.

These types of questions raise serious ethical issues. Many of the answers could have legal implications and risk child abuse interventions. These interviews were also not necessarily private, nor administered by people with skills in dealing with drug and alcohol issues. The responses are most likely to reflect respondents’ desire to give the “right” answers that do not get them into trouble, rather than any valid record of their behaviour.

The next questions, which are quite complex, seek details of respondents’ awareness and use of local drug and alcohol services and financial support services. Answers again are likely to be skewed by the above concerns.

The last section finally gets to “Opinions of the Impact of the Debit Card Trials”. It asks a series of questions on community benefits, putting words in respondents’ mouths. It then asks whether they had cut back on the behaviours in the earlier list of possible sins. By then, I suspect, all responses would be confused or fearful and grossly inaccurate.

We need solid data to justify major changes

The above details suggest that most responses to the survey should be seen as seriously flawed. These are the main sources of data being used to justify the continuation and expansion of the program. The report claims triangulation of data and uses qualitative research with a range of interested parties as confirming these findings. Unfortunately, these interviews were not fully recorded; instead, notes were taken and then collectively interpreted. The results are therefore also questionable.

The use of official data as the third source is also questionable, as these are mostly general data that do not apply specifically to cashless debit card recipients. They therefore, at best, indicate some changes that might apply to card users. Most trials have some initial effect, the Hawthorne effect, but this usually wears off, so good policy needs very solid data to justify major changes, particularly in areas that deeply affect people’s lives.

There are signs elsewhere that changing demands for labour and other technical advances are raising questions about whether social welfare programs need radical reviews to make them less conditional and more flexible. These potential directions mean that these changes need a high standard of proof to justify their costs and directions.